Artificial intelligence is changing nearly everything, from business and education to healthcare and even how governments make decisions. But there is a growing idea that often gets lost in the excitement: AI transformation is a problem of governance.

In simple terms, the biggest challenge of AI is not just building smarter systems. It’s about controlling, regulating, and guiding them responsibly so that they do not create harm, inequality, or confusion in society.

Let’s break this down in a practical way.

AI Transformation – What Are We Really Talking About?

AI transformation simply means AI slowly becoming part of everyday systems.

Not in a dramatic movie way. More like:

- Banks using AI to approve loans

- Companies using AI to screen job applicants

- Apps deciding what content you see

- Hospitals using AI to assist diagnosis

- Governments experimenting with predictive systems

It’s not one change. It’s a chain reaction happening across industries.

And here’s the thing… once AI enters decision-making, it stops being just a “tool.” It becomes part of authority.

That’s where governance becomes unavoidable.

Why Governance Becomes the Real Issue

At first, AI feels like a technical challenge. Better models, better data, more computing power. Simple, right?

But that’s not where most of the real-world problems appear.

The actual issues show up in questions like:

- Who is responsible when AI makes a wrong decision?

- Why did the system reject this person?

- Can we trust the data behind it?

- Who controls the algorithm?

These are not engineering problems. These are governance problems.

And that’s why the phrase “AI transformation is a problem of governance” keeps appearing in online discussions, especially on x.com. People are basically pointing out the same concern from different angles: control is lagging behind capability.

Even in Twitter discussions on the topic, you’ll see debates about fairness, job loss, surveillance, and transparency. Different opinions, same worry underneath.

The Main Governance Problems in AI

Let’s break it down a bit more clearly.

1. No Clear Responsibility

If an AI system makes a harmful decision, things get blurry fast.

Is it:

- The developer?

- The company using it?

- The data provider?

- Or the algorithm itself?

Most systems don’t clearly define this, and that’s a problem.

2. Bias That No One Notices Early

AI learns from data. And data comes from human society. So if society has bias, AI quietly learns it too.

That can lead to:

- Unfair hiring decisions

- Skewed loan approvals

- Biased recommendations in content systems

The problem is, it often looks “neutral” on the surface.
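One common way to catch this early is to compare outcome rates across groups instead of trusting that the system is neutral. The sketch below is a minimal illustration, assuming decisions are logged as hypothetical (group, approved) pairs. It applies the “four-fifths” rule of thumb from the US Uniform Guidelines on Employee Selection Procedures: any group whose approval rate falls below 80% of the highest group’s rate gets flagged. The function names and sample data are made up, and a flag is a prompt for human investigation, not proof of bias.

```python
from collections import Counter

def selection_rates(decisions):
    """Approval rate per group, from an iterable of (group, approved) pairs."""
    totals, approvals = Counter(), Counter()
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}

def four_fifths_flags(decisions, threshold=0.8):
    """True for each group whose rate is below `threshold` times the highest group rate."""
    rates = selection_rates(decisions)
    best = max(rates.values(), default=0.0)
    if best == 0:
        # Nobody was approved, so there is no reference rate to compare against.
        return {group: False for group in rates}
    return {group: rate / best < threshold for group, rate in rates.items()}

# Hypothetical audit log: 100 applicants per group.
log = ([("A", True)] * 60 + [("A", False)] * 40
       + [("B", True)] * 40 + [("B", False)] * 60)
print(selection_rates(log))    # {'A': 0.6, 'B': 0.4}
print(four_fifths_flags(log))  # {'A': False, 'B': True}
```

With these made-up numbers, group B’s rate is about two thirds of group A’s, so it is flagged even though no rule in the system mentions groups at all.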

3. Data Privacy Questions

AI systems need huge amounts of data.

But then questions come up:

- Where did that data come from?

- Did users actually agree?

- Is it being reused somewhere else?

Without governance, data becomes something people lose control over without even realizing it.

4. Rules Are Too Slow

Technology moves fast. Laws don’t.

So what happens is:

- AI systems evolve quickly

- Regulations arrive late

- Companies operate in a grey zone

That gap is where most governance problems grow.

5. Power Concentration

A small number of companies control most advanced AI systems.

That leads to concerns like:

- Too much influence in one place

- Lack of competition

- Limited transparency

And slowly, AI stops being just a tool and starts becoming a power structure.

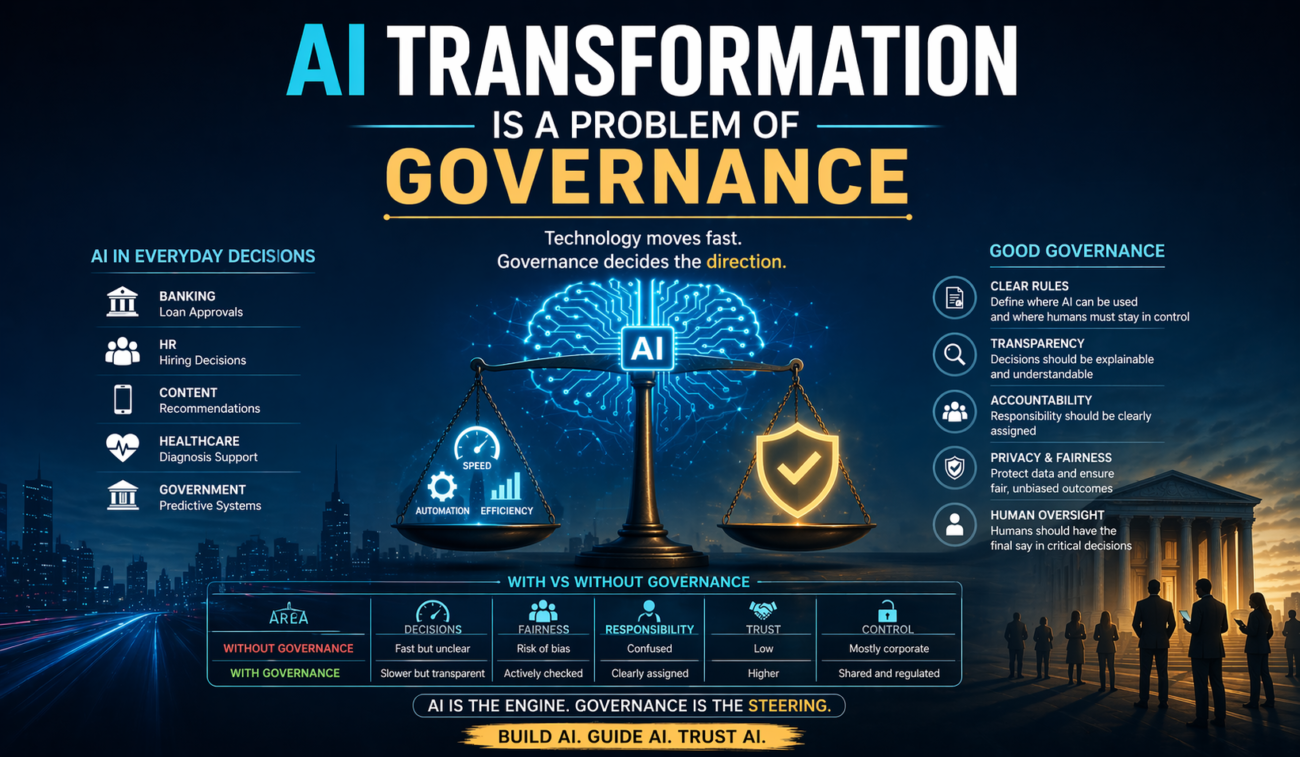

What Good AI Governance Actually Looks Like

Governance sounds abstract, but it’s actually practical.

It just means putting structure around how AI is used.

Here are some simple but important steps:

Clear Rules Before Use

Organizations should clearly define:

- Where AI can be used

- Where humans must stay in control

- What is off-limits

Transparency in Decisions

If an AI system makes a decision, there should be some explanation behind it.

Not necessarily technical jargon. Just understandable reasoning.
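For simple scoring systems, one way to produce understandable reasoning is to record which factors pushed a decision down and translate them into plain language, similar in spirit to the reasons lenders give when they decline an application. The sketch below assumes a hypothetical linear score; the weights, the “typical applicant” baseline, the threshold, and the factor names are all illustrative, and complex models need more careful explanation methods than this.

```python
# Hypothetical linear scoring model: weights, baseline, and threshold are illustrative only.
WEIGHTS = {"income_to_debt": 2.0, "missed_payments": -1.5, "years_employed": 0.5}
BASELINE = {"income_to_debt": 1.5, "missed_payments": 0, "years_employed": 3}  # a "typical" applicant
THRESHOLD = 3.0
REASON_TEXT = {
    "income_to_debt": "Income is low relative to existing debt",
    "missed_payments": "Recent missed payments",
    "years_employed": "Short employment history",
}

def decide_with_reasons(applicant, top_n=2):
    """Score an applicant and list, in plain language, the factors that hurt them most."""
    score = sum(w * applicant[name] for name, w in WEIGHTS.items())
    # Each factor's effect compared with the baseline applicant; negative means it lowered the score.
    impact = {name: w * (applicant[name] - BASELINE[name]) for name, w in WEIGHTS.items()}
    # Most harmful first; ties are broken alphabetically by factor name.
    worst = sorted((value, name) for name, value in impact.items() if value < 0)
    reasons = [REASON_TEXT[name] for _, name in worst[:top_n]]
    return {"approved": score >= THRESHOLD, "score": score, "reasons": reasons}

result = decide_with_reasons({"income_to_debt": 1.0, "missed_payments": 2, "years_employed": 1})
print(result["approved"], result["reasons"])
# False ['Recent missed payments', 'Income is low relative to existing debt']
```

The person affected sees two short sentences rather than a score of -0.5, which is the kind of explanation the section is asking for.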

Regular Checking and Audits

AI should not be “set and forget.”

It needs:

- Testing

- Monitoring

- Updates

Because data changes. Society changes. Systems drift.
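One way to make “systems drift” measurable is to compare how a model input (or its output score) is distributed today against how it was distributed when the system was validated. The sketch below uses the Population Stability Index (PSI), a drift measure widely used in credit-risk model monitoring. The bin edges and sample values are hypothetical, and the 0.1 / 0.25 alert levels are a commonly quoted rule of thumb, not a formal standard.

```python
import bisect
import math

def bin_shares(values, edges):
    """Share of values per bin defined by sorted `edges`; out-of-range values go to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        counts[min(max(i, 0), len(counts) - 1)] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else counts

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two lists of bin shares."""
    score = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        score += (a - e) * math.log(a / e)
    return score

def drift_level(score):
    # Rule-of-thumb cut-offs (assumed); teams should set their own.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate drift: investigate"
    return "significant drift: review or retrain"

edges = [0, 25, 50, 75, 100]
baseline = bin_shares([10, 30, 60, 90], edges)                           # at validation time
current = bin_shares([10, 30, 35, 55, 60, 65, 80, 85, 90, 95], edges)   # this month
score = psi(baseline, current)
print(round(score, 3), drift_level(score))  # 0.228 moderate drift: investigate
```

Running a check like this on a schedule turns “monitoring” from a good intention into a concrete alert someone is responsible for acting on.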

Human Oversight (Very Important)

Especially in sensitive areas like:

- Healthcare

- Justice systems

- Hiring decisions

AI can help, but humans should still have the final say.
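In software, “humans have the final say” usually becomes an explicit routing rule. The minimal sketch below assumes two hypothetical policies: decisions in the sensitive areas listed above always go to a person, and elsewhere any decision the model is less than 90% confident about also goes to a person. The domain names, the `Decision` fields, and the 0.9 cut-off are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical policy: areas where a human must always make the final call.
SENSITIVE_DOMAINS = {"healthcare", "justice", "hiring"}

@dataclass
class Decision:
    domain: str
    ai_recommendation: str
    confidence: float  # the model's own confidence, 0.0 to 1.0

def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Return 'human_review' or 'automated' for a proposed AI decision."""
    if decision.domain in SENSITIVE_DOMAINS:
        return "human_review"  # the AI output is shown to the reviewer as advice only
    if decision.confidence < min_confidence:
        return "human_review"  # low confidence: escalate instead of guessing
    return "automated"

print(route(Decision("hiring", "reject", 0.99)))      # human_review
print(route(Decision("content", "recommend", 0.95)))  # automated
print(route(Decision("content", "recommend", 0.60)))  # human_review
```

The domain rule is checked before confidence on purpose: even a 99%-confident hiring rejection still lands in front of a person.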

Simple Comparison: With vs Without Governance

| Area | Without Governance | With Governance |
|---|---|---|
| Decisions | Fast but unclear | Slower but transparent |
| Fairness | Risk of bias | Actively checked |
| Responsibility | Confused | Clearly assigned |
| Trust | Low | Higher |
| Control | Mostly corporate | Shared and regulated |

It’s not about stopping AI. It’s about guiding it properly.

The Social Media Side of the Debate

If you scroll through platforms like x.com (Twitter), you’ll notice something interesting.

People are not just excited about AI. They are also unsure about it.

You’ll see posts asking:

- “Is AI replacing human jobs too fast?”

- “Who is controlling these algorithms?”

- “Can we trust AI decisions?”

These aren’t technical questions. They’re governance questions in disguise.

And that’s exactly the point.

AI transformation is not just happening in labs. It’s happening in public conversation too.

A Simple Way to Think About It

Maybe this helps:

AI is like a powerful engine.

But governance is the steering system.

Without steering, speed doesn’t matter much. In fact, it can make things worse.

That’s really the core idea behind the statement “AI transformation is a problem of governance.”

FAQs

1. Why is AI transformation considered a governance issue?

Because the main risks (bias, unclear responsibility, privacy) are not technical but related to control and regulation.

2. What does governance mean in AI?

It means the rules, policies, and systems that make sure AI is used ethically, safely, and fairly.

3. How does social media like x.com relate to AI governance?

Platforms like x.com (Twitter) amplify public concerns and debates about AI’s impact, which in turn influence policy discussions.

4. Can AI exist without governance?

Technically yes, but it would lead to unsafe, biased, and uncontrolled systems that can harm society.

5. Who is responsible for AI governance?

Governments, tech companies, and international organizations all share responsibility.

Conclusion

AI is not slowing down. It’s becoming more common every day in the systems we use, the choices we rely on, and the platforms we trust.

But the real challenge isn’t just building it.

It’s managing it.

Because without governance, AI becomes unpredictable. With governance, it becomes beneficial, fair, and more stable.

So yes, AI transformation is not just a tech story. It’s a governance story. And we’re still writing the rules as we go.